SciML starter tutorial: Universal Differential Equations (UDEs)

Hybrid scientific machine learning (SciML) combines mechanistic (ODE) models with machine learning (ML) models [20]. PEtab.jl supports three SciML problem types, and any combination of them:

ML model inside the ODE dynamics. This covers both Universal Differential Equations (UDEs), also called grey-box and hybrid neural ODEs [21, 22], as well as neural ODEs (NODEs) [23].

ML model in the observable formula which links ODE output to measurement data.

Pre-simulation ML model, where the ML model is evaluated before simulation to map input data (e.g. high-dimensional images) to ODE parameters and/or initial conditions.

This tutorial introduces SciML functionality in PEtab.jl using the UDE case. Other tutorials in this section cover the remaining cases. Familiarity with setting up a PEtab problem for mechanistic models is assumed; see the PEtab.jl starting tutorial.

While this tutorial focuses on a simple use-case, note that SciML support is implemented on top of the functionality for mechanistic models. Thus, features for mechanistic models (e.g. simulation conditions and events) are also supported for SciML problems.

Input problem

As a running example, we use the following two-state ODE model:

with initial conditions

To turn this into a UDE [20], we assume the production term for

Where

To estimate model parameters, measurements of both d and the parameters of NN.

Creating a PEtab SciML problem

A PEtab SciML parameter estimation problem (PEtabODEProblem) is created largely the same way as for a mechanistic model. The difference is that one or more Lux.jl neural networks are defined and embedded into the problem.

Defining the ML model

PEtab.jl only supports Lux.jl ML models. To be compatible, the Lux model must define a set of layers with unique identifiers together with a forward pass. This is most easily done using Lux.Chain:

using Lux

lux_model = Lux.Chain(

Lux.Dense(1 => 3, Lux.softplus, use_bias = false),

Lux.Dense(3 => 3, Lux.softplus, use_bias = false),

Lux.Dense(3 => 1, Lux.softplus, use_bias = false),

)Chain(

layer_1 = Dense(1 => 3, softplus, use_bias=false), # 3 parameters

layer_2 = Dense(3 => 3, softplus, use_bias=false), # 9 parameters

layer_3 = Dense(3 => 1, softplus, use_bias=false), # 3 parameters

) # Total: 15 parameters,

# plus 0 states.Here, softplus is used to keep the output positive.

Defining the dynamic UDE model

An UDE dynamic model is most easily created using ModelingToolkitNeuralNets. The first step is to create a symbolic neural network from the Lux model:

using ModelingToolkitNeuralNets

@SymbolicNeuralNetwork NN, theta = lux_modelHere theta is the network parameter vector, and NN is the neural network which can be embedded into a ModelingToolkit model:

using ModelingToolkitBase

using ModelingToolkitBase: t_nounits as t, D_nounits as D

@parameters d

@variables X(t)=2.0 Y(t)=0.1

eqs = [

D(X) ~ NN([Y], theta)[1] - d * X

D(Y) ~ X - d * Y

]

@mtkcompile sys_ude = System(eqs, t)Alternatively, the UDE can be formulated as a Catalyst ReactionSystem:

using Catalyst

# Scalar NN rate depending on Y.

A(z) = NN([z], theta)

rn_ude = @reaction_network begin

@species begin

X(t) = 2.0

Y(t) = 0.1

end

@parameters d

$A(Y)[1], 0 --> X

d, X --> 0

1.0, X --> X + Y

d, Y --> 0

endWhen embedding a neural network into a ReactionSystem, it must be introduced via a symbolic function (here A(z)) and can only be used in the parameter position of a reaction.

For more on symbolic UDE creation, see the ModelingToolkitNeuralNets documentation.

Defining ML parameters to estimate

For each ML-model, a PEtabMLParameter must be declared to specify whether its parameters are estimated. Thereby, when specifying the parameters-to-estimate vector for a SciML problem, both mechanistic parameters (PEtabParameter) and ML parameters (PEtabMLParameter) must be included in the parameter vector:

using PEtab

pest = [

PEtabMLParameter(:theta),

PEtabParameter(:d; scale = :log10)

]2-element Vector{Any}:

PEtabMLParameter theta: estimate

PEtabParameter d: estimate (scale = log10, bounds = [1.0e-03, 1.0e+03])A PEtabMLParameter can optionally be given an initial value, priors, and be fixed to specific value. Note, parameter d is estimated on log10 scale to enforce positivity.

Measurements and observables

Measurements and observables are defined as for a mechanistic PEtabODEProblem. For the running example, we here use simulated data:

using OrdinaryDiffEqTsit5, DataFrames, StableRNGs

rng = StableRNGs.StableRNG(2) # for reproducibility

function f_true!(du, u, p, t)

X, Y = u

v, K, n, d = p

du[1] = (v * Y^n) / (Y^n + K^n) - d * X

du[2] = X - d * Y

end

u0_true = [2.0, 0.1]

p_true = [1.1, 2.0, 3.0, 0.5]

tend = 44.0

ode_true = ODEProblem(f_true!, u0_true, (0.0, tend), p_true)

sol = solve(

ode_true, Tsit5(); abstol = 1e-8, reltol = 1e-8, saveat = 0:2:tend

)

data_X = sol[1, :] .+ randn(rng, length(sol.t)) .* 0.5

data_Y = sol[2, :] .+ randn(rng, length(sol.t)) .* 0.7

df1 = DataFrame(obs_id = "obs_X", time = sol.t, measurement = data_X)

df2 = DataFrame(obs_id = "obs_Y", time = sol.t, measurement = data_Y)

measurements = vcat(df1, df2)Since both X and Y are assumed measured, the observables are:

observables = [

PEtabObservable(:obs_X, :X, 0.5),

PEtabObservable(:obs_Y, :Y, 0.7),

]2-element Vector{PEtabObservable}:

PEtabObservable obs_X: data ~ Normal(μ=X, σ=0.5)

PEtabObservable obs_Y: data ~ Normal(μ=Y, σ=0.7)Bringing it all together

Given the dynamic model (sys_ude or rn_ude), measurements, observables, and parameters to estimate, a PEtabModel is created the same way as for a mechanistic problem:

# Via ReactionSystem

model_ude = PEtabModel(rn_ude, observables, measurements, pest)

# Via ODESystem

model_ude = PEtabModel(sys_ude, observables, measurements, pest)Given a PEtabModel, a PEtabODEProblem can then be constructed:

petab_prob = PEtabODEProblem(model_ude)

describe(petab_prob)PEtabODEProblem ODESystemModel

Problem statistics

Parameters to estimate: 16

ODE: 2 states, 16 parameters

Observables: 2

Simulation conditions: 1

ML models

theta: (mode=simulation, parameters=15)

Configuration

Gradient method: ForwardDiff

Hessian method: ForwardDiff

ODE solver (nllh): Tsit5 (abstol=1.0e-08, reltol=1.0e-08, maxiters=1e+04)

ODE solver (grad): Tsit5 (abstol=1.0e-08, reltol=1.0e-08, maxiters=1e+04)As seen from the problem summary, the problem includes both mechanistic and ML parameters to estimate. It is further seen the non-stiff ODE solver Tsit5() is used and gradients are computed with ForwardDiff. These options can be changed, and the same PEtabODEProblem options are supported as for mechanistic models.

Parameter estimation (model training)

SciML problems can be trained using the same approaches as mechanistic models (e.g. multi-start local optimization with a BFGS-based method via calibrate). In practice, SciML training often benefits from optimizers commonly used for ML [24], such as the Adam optimizer [25].

Here, we will set up a training loop with Adam from Optimisers.jl. Like most optimizers, Adam requires a start guess, which can be generated with:

rng = StableRNG(42) # for reproducibility

x0 = get_startguesses(rng, petab_prob, 1)ComponentVector{Float64}(log10_d = 0.48308917611117286, theta = (layer_1 = (weight = [-0.038298919796943665; 0.8650590777397156; 0.7111778855323792;;]), layer_2 = (weight = [0.9691276550292969 0.11354947090148926 -0.64198899269104; -0.9428482055664062 0.9947323799133301 0.7659111022949219; 0.8692448139190674 0.7045483589172363 0.3672807216644287]), layer_3 = (weight = [0.9935319423675537 -0.6066112518310547 -0.90108323097229])))The start guess includes both mechanistic parameters (e.g. x0.d) and ML parameters (e.g. x0.theta). By default, get_startguesses initializes ML parameters with the initializers in the Lux model. While not needed here, SciML training often improve by initializing ML parameters to smaller values [26].

With a start guess available, a training loop can be written leveraging that the objective function and its gradient can be computed with petab_prob.nllh(x) and petab_prob.grad(x) respectively. For example, to train for 5000 epochs with Adam:

using Optimisers

n_epochs = 7500

x = deepcopy(x0)

learning_rate = 1e-3

state = Optimisers.setup(Adam(learning_rate), x)

for epoch in 1:n_epochs

g = petab_prob.grad(x)

state, x = Optimisers.update(state, x, g)

# Stop if the objective cannot be evaluated (e.g. simulation failure)

if !isfinite(petab_prob.nllh(x))

break

end

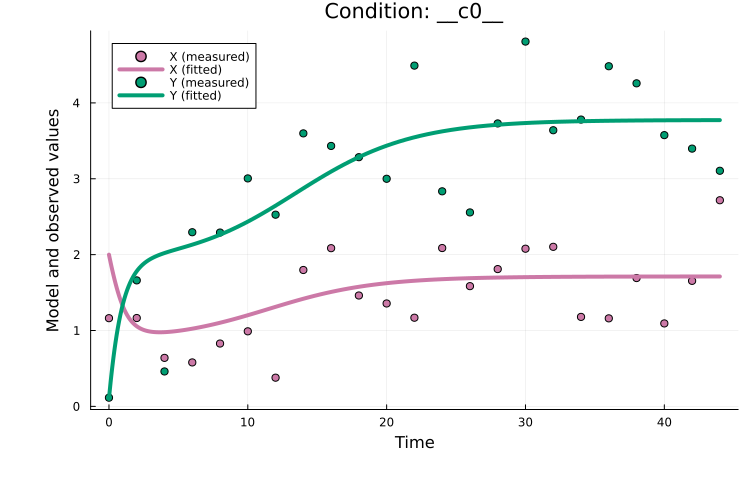

endFrom plotting the fitted model, we see the model fits the data well:

using Plots

plot(x, petab_prob)

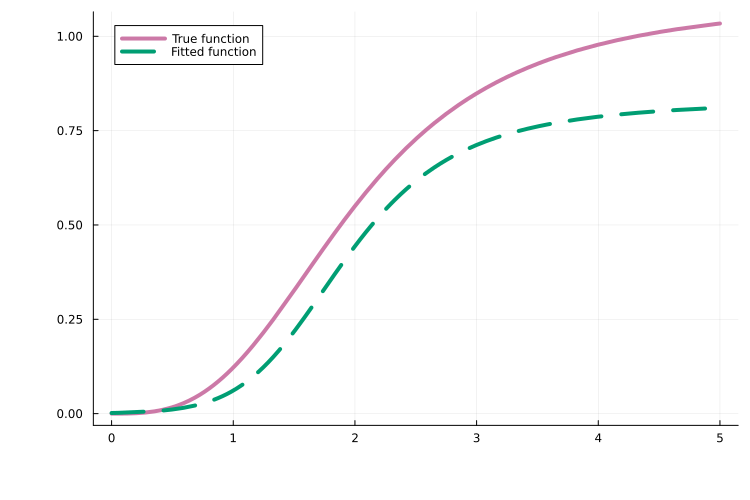

As one of many possible downstream analyses, we can compare the learned neural network to the true function it aims to approximate. In this example, the approximation is quite good:

# True function to learn

true_func(y) = 1.1 * (y^3) / (2^3 + y^3)

# Extract the fitted neural network and parameters from the fitted ODEProblem

ode_problem_fitted, _ = get_odeproblem(x, petab_prob)

fitted_NN = ode_problem_fitted.ps[NN]

fitted_theta = ode_problem_fitted.ps[theta]

fitted_func(y) = fitted_NN([y], fitted_theta)[1]

# Plot true vs fitted

plot(true_func, 0.0, 5.0; label = "True function")

plot!(fitted_func, 0.0, 5.0; label = "Fitted function", linestyle = :dash)

The training loop above is intentionally minimal. In practice, Optimisers.jl and related packages can be used to add learning-rate schedules, gradient clipping, early stopping, and logging. It is also worth noting that plain Adam is often inefficient for SciML problems, and to address this PEtab.jl supports more effective strategies such as curriculum training, multiple shooting, and combinations thereof (see below).

Next steps

This tutorial showed how to define a PEtabODEProblem for a SciML problem. For all available options when building SciML problems (e.g. PEtabMLParameter settings), see the API documentation. For additional features, including SciML problem types beyond UDEs, see the following tutorials:

Extended UDE tutorial: Define the UDE model as an

ODEProblemfor more control over the problem setup.ML models in observables: define an ML model in the observable formula of a

PEtabObservable(e.g. to correct model misspecification).Pre-simulation ML models: define ML models that map input data (e.g. high-dimensional images) to ODE parameters or initial conditions prior to model simulation.

Importing PEtab SciML: import problems in the PEtab-SciML standard format.

In addition, this tutorial showed how to train a UDE via a simple Adam training loop. More efficient training strategies are supported via PEtabTraining.jl (e.g. curriculum learning and multiple shooting); see SciML training strategies.

Lastly, as for mechanistic models, PEtabODEProblem has many configurable options for SciML problems. A discussion of defaults and recommendations is available in Default PEtabODEProblem options.

Copy pasteable example

using Catalyst, ComponentArrays, Lux, ModelingToolkitBase,

ModelingToolkitNeuralNets, PEtab

using ModelingToolkitBase: t_nounits as t, D_nounits as D

# MLModel

lux_model = Lux.Chain(

Lux.Dense(1 => 3, Lux.softplus, use_bias = false),

Lux.Dense(3 => 3, Lux.softplus, use_bias = false),

Lux.Dense(3 => 1, Lux.softplus, use_bias = false),

)

# UDE-system

@SymbolicNeuralNetwork NN, theta = lux_model

@parameters d

@variables X(t)=2.0 Y(t)=0.1

eqs = [

# Equations

D(X) ~ NN([Y], theta)[1] - d * X

D(Y) ~ X - d * Y

]

@mtkcompile sys_ude = System(eqs, t)

# UDE-system via ReactionSystem

using Catalyst

# Scalar NN rate depending on Y.

A(z) = NN([z], theta)

rn_ude = @reaction_network begin

@species begin

X(t) = 2.0

Y(t) = 0.1

end

@parameters d

$A(Y)[1], 0 --> X

d, X --> 0

1.0, X --> X + Y

d, Y --> 0

end

# Parameters to estimate

pest = [

PEtabMLParameter(:theta),

PEtabParameter(:d; scale = :log10)

]

# Observables linking model output to data

observables = [

PEtabObservable(:obs_X, :X, 0.5),

PEtabObservable(:obs_Y, :Y, 0.7),

]

# Simulate data

using OrdinaryDiffEqTsit5, DataFrames, StableRNGs

rng = StableRNGs.StableRNG(2)

function f_true!(du, u, p, t)

X, Y = u

v, K, n, d = p

du[1] = (v * Y^n) / (Y^n + K^n) - d * X

du[2] = X - d * Y

end

u0_true = [2.0, 0.1]

p_true = [1.1, 2.0, 3.0, 0.5]

tend = 44.0

ode_true = ODEProblem(f_true!, u0_true, (0.0, tend), p_true)

sol = solve(

ode_true, Tsit5(); abstol = 1e-8, reltol = 1e-8, saveat = 0:2:tend

)

data_X = sol[1, :] .+ randn(rng, length(sol.t)) .* 0.5

data_Y = sol[2, :] .+ randn(rng, length(sol.t)) .* 0.7

df1 = DataFrame(obs_id = "obs_X", time = sol.t, measurement = data_X)

df2 = DataFrame(obs_id = "obs_Y", time = sol.t, measurement = data_Y)

measurements = vcat(df1, df2)

# Create parameter estimation problem

model_ude = PEtabModel(sys_ude, observables, measurements, pest)

petab_prob = PEtabODEProblem(model_ude)

# Get random start-guess for model training

rng = StableRNG(42) # for reproducibility

x0 = get_startguesses(rng, petab_prob, 1)

# Simple Adam training loop

using Optimisers, Plots

n_epochs = 7500

x = deepcopy(x0)

learning_rate = 1e-3

state = Optimisers.setup(Adam(learning_rate), x)

for epoch in 1:n_epochs

g = petab_prob.grad(x)

state, x = Optimisers.update(state, x, g)

# Stop if the objective cannot be evaluated (e.g. simulation failure)

if !isfinite(petab_prob.nllh(x))

break

end

end

plot(x, petab_prob)

# True function to learn

true_func(y) = 1.1 * (y^3) / (2^3 + y^3)

# Fitted neural network

ode_problem_fitted, _ = get_odeproblem(x, petab_prob)

fitted_NN = ode_problem_fitted.ps[NN]

fitted_theta = ode_problem_fitted.ps[theta]

fitted_func(y) = fitted_NN(y, fitted_theta)[1]

# Plots the true and fitted functions

plot(true_func, 0.0, 5.0; lw=8, label="True function")

plot!(fitted_func, 0.0, 5.0; lw=6, label="Fitted function", linestyle=:dash)References

C. Rackauckas, Y. Ma, J. Martensen, C. Warner, K. Zubov, R. Supekar, D. Skinner, A. Ramadhan and A. Edelman. Universal differential equations for scientific machine learning, arXiv preprint arXiv:2001.04385 (2020).

J. Brucker, R. Behmann, W. G. Bessler and R. Gasper. Neural ordinary differential equations for grey-box modelling of lithium-ion batteries on the basis of an equivalent circuit model. Energies 15, 2661 (2022).

B. J. Zou, M. E. Levine, D. P. Zaharieva, R. Johari and E. B. Fox. Hybrid2 neural ODE causal modeling and an application to glycemic response. In: Proceedings of the 41st International Conference on Machine Learning, Vol. 235 of ICML'24 (JMLR.org, Vienna, Austria, Jul 2024); pp. 62934–62963. Accessed on Apr 20, 2026.

R. T. Chen, Y. Rubanova, J. Bettencourt and D. K. Duvenaud. Neural ordinary differential equations. Advances in neural information processing systems 31 (2018).

M. Philipps, N. Schmid and J. Hasenauer. Current state and open problems in universal differential equations for systems biology, npj Systems Biology and Applications 11, 101 (2025).

D. P. Kingma and J. Ba. Adam: A method for stochastic optimization, arXiv preprint arXiv:1412.6980 (2014).

P. Kidger. On neural differential equations, arXiv preprint arXiv:2202.02435 (2022).